The End of "Press 1 for Sales"

Traditional IVR systems have been the front door of call centers for decades, and callers have always hated them. Rigid menu trees, limited recognition vocabulary, and the constant frustration of shouting "AGENT" into the phone have made IVR one of the most despised technologies in customer service. According to industry surveys, over 60% of callers attempt to bypass IVR menus entirely within the first few seconds.

AI-powered voice bots change this equation completely. Instead of forcing callers through a predetermined tree, a voice bot listens to natural speech, understands intent, and responds conversationally. When built correctly, the experience feels like speaking with a knowledgeable human agent rather than wrestling with a machine.

But "built correctly" is doing a lot of heavy lifting in that sentence. At RG INSYS, we have spent years deploying voice bots on top of FreeSWITCH and Asterisk for call centers across India and internationally. The gap between a demo that impresses stakeholders and a production system that reliably handles thousands of concurrent calls is enormous. This article covers the architecture, the pitfalls we have encountered, and the real-world performance numbers from our deployments.

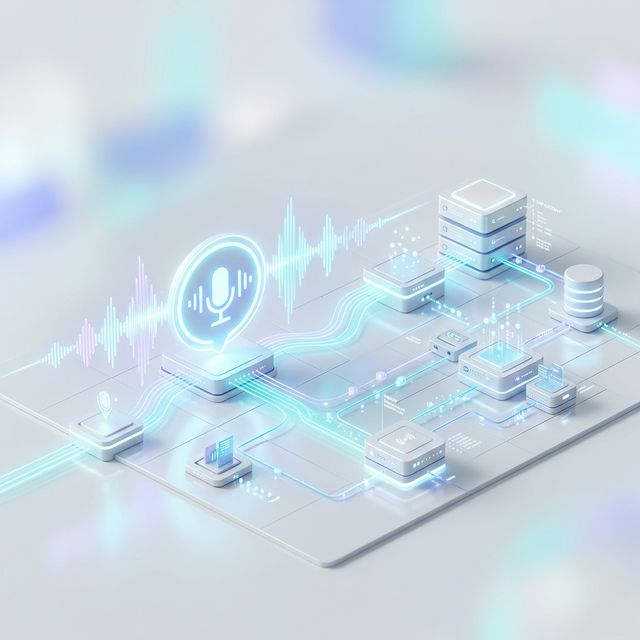

Core Architecture: Four Components Working in Concert

Every production voice bot consists of four fundamental layers. Getting each one right matters, but getting them to work together with minimal latency is where the real engineering challenge lies.

1. The Telephony Layer (FreeSWITCH or Asterisk)

The telephony layer handles SIP signaling, media streams, call routing, and integration with carriers. We use FreeSWITCH as our primary platform because of its superior media handling, native WebSocket support, and ability to fork audio streams to external processes without degrading call quality. Asterisk works well for smaller deployments, but FreeSWITCH scales more predictably under heavy concurrent call loads.

The telephony layer captures the raw audio from the caller (typically 8 kHz or 16 kHz PCM) and streams it to the speech recognition engine. It also receives synthesized audio back and plays it into the call. This bidirectional audio bridge must operate with absolute reliability because any glitch is immediately audible to the caller.
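The sketch below shows one way a process receiving forked call audio might split it into frames and measure frame energy. It is a minimal example that assumes mono 16-bit little-endian linear PCM and 20 ms frames. The function names are illustrative and are not part of any FreeSWITCH API.

```python
import array
import math
import sys

FRAME_MS = 20  # assumption: 20 ms frames, a common telephony packetization interval


def frame_bytes(sample_rate_hz: int, frame_ms: int = FRAME_MS) -> int:
    """Bytes in one frame of mono 16-bit (2 bytes per sample) linear PCM."""
    return sample_rate_hz * frame_ms // 1000 * 2


def split_frames(pcm: bytes, sample_rate_hz: int) -> list[bytes]:
    """Split a raw PCM byte stream into whole frames; a trailing partial frame is dropped."""
    size = frame_bytes(sample_rate_hz)
    return [pcm[i:i + size] for i in range(0, len(pcm) - size + 1, size)]


def frame_rms(frame: bytes) -> float:
    """Root-mean-square amplitude of one frame of little-endian 16-bit PCM."""
    samples = array.array("h")
    samples.frombytes(frame)
    if sys.byteorder == "big":
        samples.byteswap()  # array uses native byte order; the stream is assumed little-endian
    if not samples:
        return 0.0
    return math.sqrt(sum(s * s for s in samples) / len(samples))
```

At 8 kHz, a 20 ms frame holds 160 samples, so `frame_bytes(8000)` returns 320. At 16 kHz it returns 640. Per-frame RMS is only a crude speech/silence signal, and production systems usually rely on a proper voice activity detector.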

2. Speech-to-Text (STT)

The STT engine converts spoken audio into text in real time. We have worked with Google Cloud Speech, Azure Speech Services, Deepgram, and open-source models like Whisper. The choice depends on language requirements, latency tolerance, and budget.

For Indian call centers handling multilingual traffic (Hindi, English, Tamil, Telugu, and code-mixed speech), we typically combine cloud STT for accuracy with locally hosted models for cost control on high-volume lines. Streaming recognition is essential. Batch mode, where you wait for the caller to finish speaking before processing, adds unacceptable delay. The STT engine must return partial results as the caller speaks so downstream components can begin processing early.
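Streaming STT services generally emit two kinds of result for each segment. First come interim (partial) hypotheses, which may still be revised. Then comes a final result. The sketch below shows how these can be merged into one running transcript. It assumes a hypothetical, vendor-neutral event shape (`SttEvent` with `text` and `is_final`), and each real provider names these fields differently.

```python
from dataclasses import dataclass, field


@dataclass
class SttEvent:
    """Hypothetical, vendor-neutral shape of one streaming STT result."""
    text: str
    is_final: bool


@dataclass
class StreamingTranscript:
    committed: list[str] = field(default_factory=list)  # finalized segments
    partial: str = ""  # latest interim hypothesis; may still change

    def update(self, event: SttEvent) -> str:
        """Apply one STT event and return the best current transcript."""
        if event.is_final:
            self.committed.append(event.text)
            self.partial = ""
        else:
            # Interim results replace each other rather than accumulating.
            self.partial = event.text
        return self.current()

    def current(self) -> str:
        parts = self.committed + ([self.partial] if self.partial else [])
        return " ".join(parts)
```

Downstream components can read `current()` on every event instead of waiting for the final result.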

3. Natural Language Understanding (NLU)

Once we have text, the NLU layer determines what the caller wants. This can be a custom-trained intent classifier, a large language model with prompt engineering, or a hybrid of both. For well-defined use cases like appointment scheduling, balance inquiries, or complaint logging, a focused intent classifier with entity extraction is faster and more reliable than a general-purpose LLM.
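Here is a deliberately simple rule-based sketch that illustrates the input/output contract of a focused classifier. The intent names, keywords, and regular expressions are hypothetical. A production system would use a trained model, but downstream code would consume the same `(intent, entities)` pair.

```python
import re
from typing import Optional

# Hypothetical keyword rules for the narrow use cases named above.
INTENT_KEYWORDS = {
    "schedule_appointment": ("appointment", "book", "schedule"),
    "balance_inquiry": ("balance", "how much"),
    "log_complaint": ("complaint", "complain", "not working"),
}
ACCOUNT_RE = re.compile(r"\b(\d{6,12})\b")  # assumption: account numbers are 6-12 digits
DATE_RE = re.compile(
    r"\b(today|tomorrow|monday|tuesday|wednesday|thursday|friday|saturday|sunday)\b"
)


def classify(text: str) -> tuple[Optional[str], dict[str, str]]:
    """Return (intent, entities); intent is None when no rule matches."""
    lowered = text.lower()
    intent = next(
        (name for name, words in INTENT_KEYWORDS.items() if any(w in lowered for w in words)),
        None,
    )
    entities: dict[str, str] = {}
    if m := ACCOUNT_RE.search(lowered):
        entities["account_number"] = m.group(1)
    if m := DATE_RE.search(lowered):
        entities["date"] = m.group(1)
    return intent, entities
```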

For open-ended conversations or complex troubleshooting flows, we integrate LLMs with retrieval-augmented generation (RAG) to ground responses in the company's actual knowledge base. The NLU layer also manages dialog state: where the conversation is, what information has been collected, and what the next step should be.
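Dialog state tracking can be sketched as slot filling. Each intent declares the information it needs, and the next action is whatever is still missing. The intent names and required slots below are assumptions for illustration.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical slot requirements per intent.
REQUIRED_SLOTS = {
    "schedule_appointment": ["date", "time"],
    "balance_inquiry": ["account_number"],
}


@dataclass
class DialogState:
    intent: Optional[str] = None
    slots: dict[str, str] = field(default_factory=dict)
    turns: int = 0

    def update(self, intent: Optional[str], entities: dict[str, str]) -> None:
        """Record one caller turn: keep the first detected intent, merge new entities."""
        self.turns += 1
        if intent and self.intent is None:
            self.intent = intent
        self.slots.update(entities)

    def next_action(self) -> str:
        """Ask for the intent, ask for the first missing slot, or fulfil the request."""
        if self.intent is None:
            return "ask_intent"
        for slot in REQUIRED_SLOTS.get(self.intent, []):
            if slot not in self.slots:
                return f"ask_{slot}"
        return "fulfil"
```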

4. Text-to-Speech (TTS)

The TTS engine converts the bot's text response back into spoken audio. Modern neural TTS engines from providers like ElevenLabs, Azure Neural Voices, and Google WaveNet produce remarkably natural speech. We cache frequently used responses (greetings, hold messages, confirmations) as pregenerated audio files to eliminate synthesis latency for common utterances.
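Here is a minimal sketch of the prompt cache described above. It assumes audio is stored as files keyed by a hash of the voice and text. `synthesize` is a hypothetical stand-in for the real TTS provider call. Dynamic responses are synthesized on demand and are not cached.

```python
import hashlib
import tempfile
from pathlib import Path
from typing import Callable, Iterable


class TtsCache:
    """Pre-generated audio for common prompts; on-demand synthesis for everything else."""

    def __init__(self, cache_dir: Path, synthesize: Callable[[str, str], bytes]):
        self.cache_dir = cache_dir
        self.cache_dir.mkdir(parents=True, exist_ok=True)
        self.synthesize = synthesize  # hypothetical provider call: (voice, text) -> audio bytes

    def _path(self, voice: str, text: str) -> Path:
        # Key on voice and exact text, so changing the voice never serves stale audio.
        digest = hashlib.sha256(f"{voice}\x00{text}".encode("utf-8")).hexdigest()
        return self.cache_dir / f"{digest}.audio"

    def warm(self, voice: str, prompts: Iterable[str]) -> None:
        """Pre-generate audio for fixed prompts (greetings, hold messages, confirmations)."""
        for text in prompts:
            path = self._path(voice, text)
            if not path.exists():
                path.write_bytes(self.synthesize(voice, text))

    def get(self, voice: str, text: str) -> bytes:
        path = self._path(voice, text)
        if path.exists():
            return path.read_bytes()  # cache hit: no synthesis latency
        return self.synthesize(voice, text)  # dynamic text: synthesize, do not cache


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        cache = TtsCache(Path(tmp), lambda voice, text: text.encode("utf-8"))  # fake synthesizer
        cache.warm("demo-voice", ["Thank you for calling."])
        print(len(cache.get("demo-voice", "Thank you for calling.")))
```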

The Latency Budget: Every Millisecond Counts

In a phone conversation, humans expect a response within 300 to 800 milliseconds. Anything beyond one second feels unnatural, and beyond two seconds, callers assume the line has gone dead. Here is how we allocate the latency budget in a typical deployment:

- End-of-speech detection: 200 to 400ms (waiting to confirm the caller has finished talking)

- STT finalization: 100 to 200ms (final transcript after speech endpoint)

- NLU processing: 50 to 150ms (intent classification and response generation)

- TTS synthesis: 100 to 300ms (generating the first audio chunk)

- Network and media overhead: 50 to 100ms
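The per-stage upper bounds above can be encoded as a budget and checked against measured timings on every turn. The stage names are illustrative, and the total assumes the stages run one after another.

```python
# Per-stage upper bounds from the latency budget above, in milliseconds.
BUDGET_MS = {
    "end_of_speech": 400,
    "stt_final": 200,
    "nlu": 150,
    "tts_first_chunk": 300,
    "network_media": 100,
}
TURN_TARGET_MS = 1000  # the "under one second" goal


def check_turn(measured_ms: dict[str, float]) -> dict[str, object]:
    """Compare one turn's stage timings (assumed sequential) against the budget."""
    total = sum(measured_ms.values())
    over = [s for s, ms in measured_ms.items() if ms > BUDGET_MS.get(s, float("inf"))]
    return {"total_ms": total, "over_budget": over, "within_target": total <= TURN_TARGET_MS}
```

A turn in which every stage sits exactly at its upper bound totals 1150ms. That turn fails the one-second target even though no single stage is over budget.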

Total: roughly 500 to 1150ms. Staying under one second requires aggressive optimization at every stage. We use streaming TTS, where the first audio chunk begins playing while the rest is still being generated, and we overlap STT finalization with NLU processing by running speculative execution on partial transcripts.
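Speculative execution on partial transcripts can be sketched as a cache. NLU runs on each new partial. When the final transcript arrives, the precomputed result is reused if the text has not changed. `nlu` is a hypothetical callable, and this version runs synchronously for clarity. In a real pipeline, `on_partial` would run off the critical path, for example in a background task.

```python
from typing import Callable, Optional


def _normalize(text: str) -> str:
    """Case- and whitespace-insensitive form used to compare transcripts."""
    return " ".join(text.lower().split())


class SpeculativeNlu:
    """Run NLU on partial transcripts so a result is ready when the final arrives."""

    def __init__(self, nlu: Callable[[str], object]):
        self.nlu = nlu  # hypothetical: text -> NLU result
        self._spec_text: Optional[str] = None
        self._spec_result: object = None

    def on_partial(self, text: str) -> None:
        norm = _normalize(text)
        if norm != self._spec_text:  # only re-run NLU when the hypothesis changed
            self._spec_text = norm
            self._spec_result = self.nlu(text)

    def on_final(self, text: str) -> tuple[object, bool]:
        """Return (result, reused_speculation) and reset for the next utterance."""
        if _normalize(text) == self._spec_text:
            result, reused = self._spec_result, True
        else:
            result, reused = self.nlu(text), False
        self._spec_text, self._spec_result = None, None
        return result, reused
```

When the speculation hits, NLU latency drops out of the critical path. When it misses, the cost is one wasted NLU call.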

"The difference between a 600ms response and a 1400ms response is the difference between a caller who stays engaged and one who demands a human agent. Latency is the single most important metric in voice bot engineering."

Common Pitfalls and How to Solve Them

Echo Cancellation

When the bot's TTS audio leaks back into the microphone and gets picked up by the STT engine, the bot starts "hearing itself." This creates a feedback loop that completely derails the conversation. Proper acoustic echo cancellation (AEC) must be applied at the telephony layer. FreeSWITCH has built-in echo cancellation, but many carrier configurations need additional processing. We run a dedicated AEC module that subtracts the known TTS output from the incoming audio stream before it reaches the STT engine.
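One standard way to "subtract the known TTS output" is an adaptive filter such as normalized least mean squares (NLMS), which learns the echo path from the reference signal. The sketch below is a textbook NLMS canceller in pure Python. It is not the specific module described above. Production AEC also needs delay estimation, double-talk detection, and residual echo suppression. In addition, `taps` must be long enough to cover the echo path delay.

```python
def nlms_echo_cancel(reference: list[float], mic: list[float],
                     taps: int = 16, mu: float = 0.5, eps: float = 1e-6) -> list[float]:
    """Remove an adaptive estimate of the echo of `reference` from `mic`.

    reference: far-end samples (the bot's own TTS output as sent to the caller)
    mic:       incoming samples from the caller, containing any echo of `reference`
    Returns the residual (mic minus estimated echo), which is what STT should see.
    """
    weights = [0.0] * taps  # adaptive FIR model of the echo path
    history = [0.0] * taps  # most recent reference samples, newest first
    residual = []
    for x, d in zip(reference, mic):
        history = [x] + history[:-1]
        echo_estimate = sum(w * h for w, h in zip(weights, history))
        e = d - echo_estimate
        # NLMS update: step size normalized by the reference energy in the window.
        step = mu * e / (eps + sum(h * h for h in history))
        weights = [w + step * h for w, h in zip(weights, history)]
        residual.append(e)
    return residual
```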

Barge-In Handling

Callers frequently interrupt the bot mid-sentence, especially when they already know what they want. The bot must detect this interruption immediately, stop speaking, and process the new input. This requires the STT engine to remain active even while TTS audio is playing, and the system must be able to cancel in-progress TTS playback within 100ms of detecting caller speech. Poor barge-in handling is one of the top reasons callers rate voice bots negatively.
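The cancellation requirement can be sketched with `asyncio`. Playback and speech detection run concurrently, and whichever finishes first wins. `play_tts` is a hypothetical stand-in that appends chunks to a list instead of writing to a call. The speech event is assumed to be set by a VAD or STT process that keeps listening during playback.

```python
import asyncio
import contextlib


async def play_tts(chunks: list[bytes], played: list[bytes], chunk_ms: int = 20) -> None:
    """Stand-in playback loop: 'writes' one chunk per frame interval."""
    for chunk in chunks:
        played.append(chunk)  # placeholder for writing audio into the call
        await asyncio.sleep(chunk_ms / 1000)


async def speak_with_barge_in(chunks: list[bytes], speech_detected: asyncio.Event,
                              played: list[bytes]) -> bool:
    """Play TTS, cancelling it as soon as `speech_detected` is set.

    Returns True if the caller barged in.
    """
    playback = asyncio.create_task(play_tts(chunks, played))
    barge = asyncio.create_task(speech_detected.wait())
    done, _ = await asyncio.wait({playback, barge}, return_when=asyncio.FIRST_COMPLETED)
    loser = playback if barge in done else barge
    loser.cancel()
    with contextlib.suppress(asyncio.CancelledError):
        await loser  # let the cancelled task finish cleanly
    return barge in done
```

Cancellation takes effect at the playback loop's next `await`. With 20 ms chunks, that keeps reaction time well inside the 100ms target, provided the speech itself is detected quickly.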

Accent and Noise Challenges

Call center traffic includes callers speaking with diverse regional accents, often from noisy environments like streets, factories, or crowded offices. STT accuracy can drop by 15 to 25% in these conditions compared to clean studio audio. We address this with noise suppression preprocessing (using models like RNNoise), accent-adapted STT models, and confidence thresholds that trigger clarification prompts ("I want to make sure I understood correctly. Did you say...?") rather than acting on uncertain transcriptions.
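Confidence thresholds can be sketched as a two-level policy. High-confidence transcripts are acted on, medium ones are confirmed, and low ones trigger a request to repeat. Both threshold values are assumptions and would need tuning per language and line.

```python
from typing import Optional

CONFIRM_BELOW = 0.85  # assumption: confirm anything less certain than this
REJECT_BELOW = 0.50   # assumption: below this, ask the caller to repeat


def next_prompt(transcript: str, confidence: float) -> Optional[str]:
    """Return a clarification prompt, or None if the transcript can be acted on."""
    if confidence < REJECT_BELOW:
        return "Sorry, I didn't catch that. Could you please repeat?"
    if confidence < CONFIRM_BELOW:
        return f'I want to make sure I understood correctly. Did you say "{transcript}"?'
    return None
```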

Graceful Handoff to Human Agents

No voice bot should attempt to handle 100% of calls. The system must recognize when it is failing and transfer the caller to a human agent smoothly. We implement handoff triggers based on multiple signals:

- Repeated low-confidence scores from the STT or NLU engine (three consecutive uncertain interpretations)

- Caller frustration indicators such as raised voice, repeated phrases, or explicit requests for a human

- Conversation depth limits where the dialog exceeds a predefined number of turns without resolution

- Specific intent detection for categories that require human judgment (complaints, legal matters, sensitive account changes)
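The triggers above can be combined into a small per-call policy object. The thresholds, phrases, and intent labels are assumptions for illustration. Acoustic cues such as a raised voice need an audio-level signal and are omitted here. "Repeated phrases" is approximated by an exact repeat of the previous utterance.

```python
from dataclasses import dataclass
from typing import Optional

HUMAN_REQUEST_PHRASES = ("agent", "human", "representative", "real person")  # illustrative
HUMAN_ONLY_INTENTS = {"complaint", "legal", "sensitive_account_change"}      # hypothetical labels


@dataclass
class HandoffPolicy:
    low_confidence_threshold: float = 0.6  # assumption; tune per deployment
    max_low_confidence_streak: int = 3     # "three consecutive uncertain interpretations"
    max_turns: int = 8                     # assumption; the turn limit is use-case specific
    low_conf_streak: int = 0
    turns: int = 0
    last_text: str = ""

    def observe(self, text: str, confidence: float, intent: Optional[str]) -> Optional[str]:
        """Record one caller turn; return a handoff reason, or None to keep going."""
        self.turns += 1
        if confidence < self.low_confidence_threshold:
            self.low_conf_streak += 1
        else:
            self.low_conf_streak = 0
        normalized = " ".join(text.lower().split())
        repeated = bool(normalized) and normalized == self.last_text
        self.last_text = normalized
        if any(p in normalized for p in HUMAN_REQUEST_PHRASES):
            return "caller_requested_human"
        if intent in HUMAN_ONLY_INTENTS:
            return "intent_requires_human"
        if repeated:
            return "caller_repeating"  # a simple frustration cue
        if self.low_conf_streak >= self.max_low_confidence_streak:
            return "repeated_low_confidence"
        if self.turns > self.max_turns:
            return "turn_limit_exceeded"
        return None
```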

When a handoff occurs, the bot passes the full conversation transcript, detected intent, and any collected entities to the human agent's screen. The caller should never have to repeat information they already provided to the bot.
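The context passed to the agent can be sketched as a serializable record. The field names are hypothetical. How the payload reaches the agent desktop depends on the deployment: it might be a CTI event, a CRM record keyed by call ID, or something similar.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Optional


@dataclass
class HandoffContext:
    """Hypothetical payload sent to the agent desktop when a call is transferred."""
    call_id: str
    reason: str  # e.g. "caller_requested_human"
    intent: Optional[str]
    entities: dict[str, str] = field(default_factory=dict)
    transcript: list[dict[str, str]] = field(default_factory=list)  # [{"speaker": ..., "text": ...}]

    def to_json(self) -> str:
        # ensure_ascii=False keeps Hindi or other non-Latin text readable for the agent.
        return json.dumps(asdict(self), ensure_ascii=False)
```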

Real World Performance Metrics

Across our deployments for mid-size to large call centers (500 to 5,000 daily calls), we consistently observe these results after the first 90 days of optimization:

- Call containment rate: 45% to 65% of calls fully resolved by the bot without human intervention

- Average handle time reduction: 30% to 40% decrease for calls that do reach agents, because the bot pre-collects information

- First-call resolution improvement: 12% to 18% increase, because the bot routes callers to the right department with context

- Caller satisfaction (CSAT): Typically neutral to positive after initial tuning. Callers who get fast resolution rate the bot higher than traditional IVR.

- Cost per interaction: 60% to 70% lower than fully human handled calls

These numbers improve steadily over time as we analyze failed conversations and retrain the NLU models on real call data.
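Once the definitions are fixed, these metrics are straightforward to compute from per-call records. The sketch assumes a hypothetical `CallRecord` export. It defines containment as calls resolved without reaching an agent, and it measures handle time reduction relative to a baseline period.

```python
from dataclasses import dataclass


@dataclass
class CallRecord:
    """Hypothetical per-call record joined from bot logs and the ACD/CRM."""
    resolved_by_bot: bool        # True if the call ended without reaching an agent
    agent_handle_seconds: float  # 0.0 when no agent was involved


def containment_rate(calls: list[CallRecord]) -> float:
    """Fraction of calls fully resolved by the bot."""
    return sum(c.resolved_by_bot for c in calls) / len(calls) if calls else 0.0


def average_agent_handle_time(calls: list[CallRecord]) -> float:
    """Mean handle time over calls that actually reached an agent."""
    agent_times = [c.agent_handle_seconds for c in calls if not c.resolved_by_bot]
    return sum(agent_times) / len(agent_times) if agent_times else 0.0


def relative_reduction(baseline: float, current: float) -> float:
    """Fractional decrease versus a baseline; 0.35 means a 35% reduction."""
    return (baseline - current) / baseline
```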

Scaling: Cloud, On-Premise, or Hybrid

Scaling a voice bot system means handling more concurrent calls without degrading latency or accuracy. The telephony layer scales horizontally. We typically deploy Kamailio as the SIP proxy in front of multiple FreeSWITCH instances, where it distributes calls across them based on current load.
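In practice, Kamailio handles this distribution, typically through its dispatcher module. The sketch below only illustrates the selection logic: choose the least-utilized healthy FreeSWITCH node that still has capacity. The node fields and capacity figures are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class MediaNode:
    uri: str           # e.g. "sip:10.0.0.11:5060" (illustrative address)
    active_calls: int
    max_calls: int     # hypothetical per-node capacity
    healthy: bool = True


def pick_node(nodes: list[MediaNode]) -> Optional[MediaNode]:
    """Choose the healthy node with the lowest utilization that still has capacity."""
    candidates = [n for n in nodes if n.healthy and n.active_calls < n.max_calls]
    if not candidates:
        return None  # every node is full or down: reject or queue the call upstream
    return min(candidates, key=lambda n: n.active_calls / n.max_calls)
```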

For STT and TTS, cloud services scale effortlessly but introduce network latency and ongoing costs. On-premise GPU servers running open-source models (Whisper for STT, Piper or Coqui for TTS) eliminate per-call API costs and keep audio data within the client's infrastructure, which matters for regulated industries like banking and healthcare.

Our recommended architecture for most clients is a hybrid model. FreeSWITCH and Kamailio run on-premise (or in the client's private cloud) for telephony and call control, and STT and TTS services are chosen based on volume, language requirements, and compliance constraints. The NLU layer runs on standard CPU instances and scales easily with container orchestration.
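The hybrid choice for STT can be expressed as a small decision function. The rules mirror the criteria named above (compliance, volume, language). The language set and the volume cut-over are hypothetical placeholders, not recommendations.

```python
from dataclasses import dataclass


@dataclass
class LineProfile:
    languages: set[str]
    daily_calls: int
    audio_must_stay_on_prem: bool  # e.g. banking or healthcare compliance


ON_PREM_LANGUAGES = {"en", "hi"}  # hypothetical: languages the local model handles acceptably
HIGH_VOLUME_CALLS = 2000          # hypothetical cost cut-over, in calls per day


def choose_stt_backend(p: LineProfile) -> str:
    """Pick "on_prem" or "cloud" STT for one line."""
    if p.audio_must_stay_on_prem:
        return "on_prem"  # compliance overrides cost and accuracy preferences
    if p.languages <= ON_PREM_LANGUAGES and p.daily_calls >= HIGH_VOLUME_CALLS:
        return "on_prem"  # local model suffices and volume makes per-call fees dominate
    return "cloud"
```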

Why We Build on FreeSWITCH at RG INSYS

At RG INSYS, FreeSWITCH is the backbone of our voice bot deployments. Its mod_audio_fork module allows us to tap into live call audio streams and route them to external AI services via WebSockets with minimal overhead. Combined with our custom Kamailio configurations for intelligent call routing and our experience tuning STT models for Indian English and regional languages, we deliver voice bot systems that work reliably in production, not just in demos.

We have learned through dozens of deployments that the technology stack matters less than the integration engineering. Getting FreeSWITCH, STT, NLU, and TTS to work together within a tight latency budget, while handling the messy realities of telephony audio, is where most projects fail. That operational expertise is what we bring to every engagement.


Planning a voice bot for your call center?

Get a free scope, timeline, and cost estimate within 48 hours. No commitment required.
